Description

Hi,

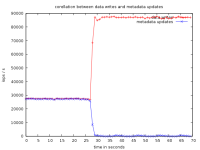

We are currently testing a setup in which a SolidFire-backed iSCSI SR is attached to XenServer, and we are running an I/O-intensive load on a VM. With a clean disk and no snapshots, we see about 20K IOPS, which is the maximum limit on the SolidFire. But as soon as we take a snapshot of the VM, throughput drops to around 10K IOPS, a degradation of about 50%. Such a drastic degradation is clearly a problem. I suspect this is because Xen creates a VDI chain for a snapshot, so every read/write operation has to be checked to determine which VDI in the chain it should go to, and this slows the whole process down.
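To make this suspicion concrete, here is a toy Python model of how I understand a read resolving through a VDI chain. It is only a sketch of the idea, not how the XenServer storage stack actually implements it; the names `Vdi` and `read_block` are made up for illustration, and the per-block dictionary is an assumption standing in for the real on-disk format.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class Vdi:
    """Hypothetical stand-in for one node in a VDI chain."""
    name: str
    parent: Optional["Vdi"] = None
    blocks: Dict[int, bytes] = field(default_factory=dict)


def read_block(leaf: Vdi, index: int) -> Tuple[bytes, int]:
    """Return (data, number of chain nodes checked) for one block read."""
    node, hops = leaf, 0
    while node is not None:
        hops += 1
        if index in node.blocks:
            return node.blocks[index], hops
        node = node.parent
    # Block never written anywhere in the chain: reads as zeros.
    return b"\x00", hops


# Before the snapshot: a single VDI holds all the data.
flat = Vdi("flat", blocks={0: b"a", 1: b"b"})

# After the snapshot: the old data sits in a read-only base, and new
# writes land in a fresh leaf that points at it.
base = Vdi("base", blocks={0: b"a", 1: b"b"})
leaf = Vdi("leaf", parent=base)
leaf.blocks[1] = b"B"  # a write after the snapshot goes to the leaf only
```

In this model, a read of a block that was not rewritten after the snapshot has to check the leaf first and then fall through to the base, which is the extra per-I/O work I suspect we are paying for.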

However, even after we delete the snapshot, we still see that the VDI chain is present in the SR. We tried to trigger garbage collection manually by running an sr-scan and also by running the cleanup.py script, but the snapshot VDI and the base VDI still remain.

There are two questions that I want to address:

1) Is such a performance degradation expected? Are there any known workarounds to mitigate it?

2) Is there a way to manually force a coalesce of the VDI chain once the snapshot has been deleted?
