Details

Type: Bug

Resolution: Unresolved

Priority: Major

Affects Version: 7.2

Environment

10 Dell PowerEdge R630 servers, each with 2 Intel Xeon E5-2630 v3 CPUs, 256 GB RAM, an Intel X520 10G dual-port NIC, a PERC H730P RAID controller and 2 × 300 GB 15K 12 Gbit/s SAS drives in RAID 1, running XenServer 7.1.

For storage we have 2 NexentaStor machines serving 2 NFS shares. The NexentaStor machines are Dell R730xd servers with the same hardware as our R630s, except that they have 2 Intel X520 10 Gb dual-port NICs, aggregated with LACP.

The ZFS configuration is a pool of mirrored vdevs (striped mirrors), which is comparable to RAID 10.

The array has 14 × 1.8 TB 10K 12 Gbit/s SAS drives, 2 SanDisk 200 GB SSDs for SLOG and 1 SanDisk 200 GB SSD for L2ARC. The switches are 4 Juniper EX4600-32F, stacked in 2 pairs: 5 servers use one pair and the other 5 servers use the other pair.

Description

After running XenServer 6.5 SP1 for about 2 years, we decided to upgrade our production environment to 7.1 (I wasn't able to choose that version when reporting this issue), mainly because of the performance improvements.

These include disk I/O and network I/O, and we also wanted to take advantage of the improved VM export mechanism, which should be at least 2x faster.

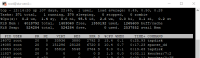

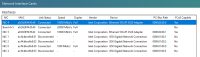

After installing the machines, we created 2 pools with 5 servers each. On every host, the 2 ports form an active/active LACP bond, which also carries the management interface. The management VLAN is configured as the native VLAN ID on the switches, and everything works fine.
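Some background on what "active/active LACP" means for a single transfer: the host and the switch pick the egress link per flow from a hash of header fields, so every packet of one TCP connection stays on the same 10 Gb port, and only many parallel flows spread across both ports. The sketch below is a simplified illustration under an assumed layer-3+4 style hash (IPv4 addresses and ports XOR-ed, modulo the number of links); it is not the exact algorithm Open vSwitch or Junos uses, and the addresses and ports are hypothetical.

```python
import ipaddress
from typing import NamedTuple


class Flow(NamedTuple):
    """A TCP/UDP flow identified by its 4-tuple."""
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int


def select_link(flow: Flow, n_links: int) -> int:
    """Pick a bond member for a flow with a simplified layer-3+4 hash.

    Assumption: XOR of the IPv4 addresses and ports, reduced modulo the
    number of links. Real implementations use their own hash functions,
    but share the key property: one flow always maps to one link.
    """
    src = int(ipaddress.IPv4Address(flow.src_ip))
    dst = int(ipaddress.IPv4Address(flow.dst_ip))
    return (src ^ dst ^ flow.src_port ^ flow.dst_port) % n_links


# Hypothetical single migration stream between two hosts:
# every packet of the flow hashes to the same member link,
# so the stream is capped at one 10 Gb port.
migration = Flow("10.0.0.11", "10.0.0.21", 51000, 443)
links_used = {select_link(migration, 2) for _ in range(1000)}

# Several parallel flows (different source ports) spread over both links.
flows = [Flow("10.0.0.11", "10.0.0.21", 51000 + i, 443) for i in range(8)]
spread = {select_link(f, 2) for f in flows}
print(links_used, spread)
```

With this toy hash, `links_used` contains a single link while `spread` covers both, which is why one stream can never exceed one member's 10 Gb even on a 2 × 10 Gb bond.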

On top of this bond, we created a VLAN for NFS storage and assigned it an IP, then attached the NFS SR without any mount options.
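For anyone reproducing the storage VLAN and NFS SR setup described above from the CLI rather than XenCenter, the steps roughly correspond to the `xe` calls below. This is a sketch under assumptions: the functions only build argument lists and run nothing, and the UUIDs, VLAN tag, IPs, SR name and export path are hypothetical placeholders. In practice `network_uuid` is printed by `xe network-create`, and `vlan_pif_uuid` has to be looked up (for example with `xe pif-list`) after `xe vlan-create`.

```python
import shlex
from typing import List

Argv = List[str]


def storage_vlan_commands(network_uuid: str, bond_pif_uuid: str,
                          vlan_pif_uuid: str, vlan_tag: int,
                          ip: str, netmask: str) -> List[Argv]:
    """xe calls that put a tagged VLAN with a static IP on one host's bond."""
    return [
        # Create the VLAN on top of the bond PIF (the bond itself gets no new IP).
        ["xe", "vlan-create", f"network-uuid={network_uuid}",
         f"pif-uuid={bond_pif_uuid}", f"vlan={vlan_tag}"],
        # Give the VLAN PIF a static address in the storage subnet.
        ["xe", "pif-reconfigure-ip", f"uuid={vlan_pif_uuid}", "mode=static",
         f"IP={ip}", f"netmask={netmask}"],
        # Keep the interface from being unplugged and label it for storage.
        ["xe", "pif-param-set", f"uuid={vlan_pif_uuid}", "disallow-unplug=true"],
        ["xe", "pif-param-set", f"uuid={vlan_pif_uuid}",
         "other-config:management_purpose=Storage"],
    ]


def nfs_sr_command(name: str, server: str, path: str) -> Argv:
    """xe call that creates a shared NFS SR with default mount options."""
    return ["xe", "sr-create", "type=nfs", "shared=true", "content-type=user",
            f"name-label={name}", f"device-config:server={server}",
            f"device-config:serverpath={path}"]


# Hypothetical values for one host; nothing is executed, only printed.
cmds = storage_vlan_commands("NET-UUID", "BOND-PIF-UUID", "VLAN-PIF-UUID",
                             100, "10.10.10.11", "255.255.255.0")
cmds.append(nfs_sr_command("NFS-SR-1", "10.10.10.5", "/export/xen"))
for argv in cmds:
    print(shlex.join(argv))
```

Returning argument lists instead of shell strings keeps quoting safe if they are later passed to `subprocess.run`, and makes it easy to review the commands before running them on a host.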

We also created about 30 other VLANs, without IPs, for our VMs.

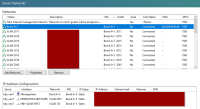

Initial tests showed that a VM can read and write at about 900 MB/s against our NFS SR, which I consider good over 10 Gb.

Migrating a VM within the pool also shows about the same bandwidth peaks. Migrating 20-30 large VMs (with 4 GB to 24 GB of RAM each) is really fast, and the peaks are even higher: up to 1100 MB/s.

But that is where the fun ends: a live VM storage migration from host-1A in pool-A to host-1B in pool-B shows only 60 MB/s over the management interface.

I then created a new VLAN called XEN-STORAGEMIGRATION in both pools and configured an additional IP interface on it (as I did for the NFS storage network) on all hosts in both pools, all within the same /24 (no routing). When I used that interface for storage migration between the pools, performance jumped from ~60 MB/s to ~160 MB/s.

I then tried the regular management interface again, and it maxed out at ~60 MB/s. To make sure it wasn't some temporary fluke, I tried the XEN-STORAGEMIGRATION interface once more, and it again maxed out at ~160 MB/s.
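To put the rates in this report into perspective, here is each one as a share of a single 10 Gb link, which is typically the most one flow can use on an LACP bond. Assumptions: MB means 10^6 bytes, protocol overhead is ignored, and the 100 GB disk used for the transfer-time comparison is a hypothetical size for illustration only.

```python
LINE_RATE_GBIT = 10                          # one 10 Gb bond member
line_rate_mb_s = LINE_RATE_GBIT * 1000 / 8   # 1250 MB/s, no protocol overhead

# Rates reported above, in MB/s.
observed = {
    "VM read/write on NFS SR": 900,
    "intra-pool XenMotion peak": 1100,
    "cross-pool, management interface": 60,
    "cross-pool, XEN-STORAGEMIGRATION VLAN": 160,
}

utilisation = {name: rate / line_rate_mb_s for name, rate in observed.items()}
for name, share in utilisation.items():
    print(f"{name:40s} {share:6.1%}")

DISK_GB = 100  # hypothetical VDI size
for name in ("cross-pool, management interface",
             "cross-pool, XEN-STORAGEMIGRATION VLAN"):
    minutes = DISK_GB * 1000 / observed[name] / 60
    print(f"{DISK_GB} GB over {name}: {minutes:.1f} min")
```

Intra-pool traffic reaches 72-88 % of one link, while both cross-pool paths stay between roughly 5 % and 13 %, so the bottleneck does not look like the physical network.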

Obviously there is some kind of limitation on the management interface and on bond0, since the management interface sits on top of it. I can't be the only one to have noticed this.

All the machines also have an Intel I350 1 Gb quad-port NIC, and I moved the management interface to it, but that just results in really, really slow XenMotion within the pool, since XenMotion uses the management interface by default.

And guess which interface is used for backups? By default, XAPI reports the management interface's IP, which means all VM backups go over the management interface and max out at ~160 MB/s.

I really do hope this is a bug in someone's brain, because this isn't enterprise-grade at all: nobody will pay for XenServer if it can't even max out 10 Gb.

//Niklas